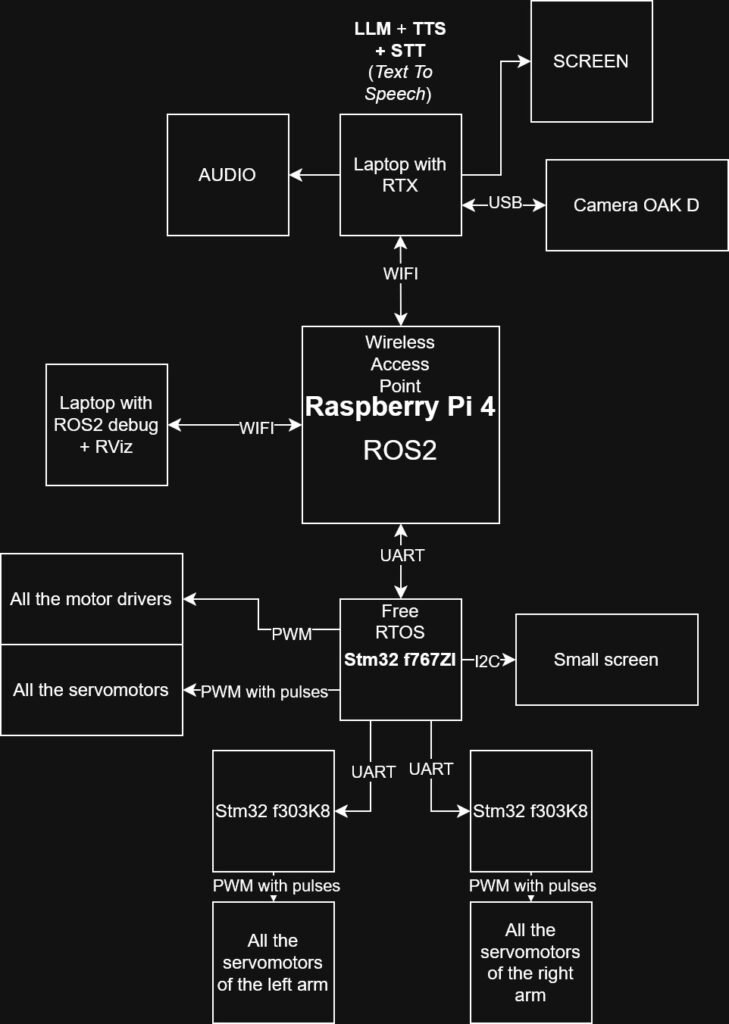

System Architecture Overview

The system is built around a distributed architecture combining AI processing, vision, and embedded control.

A laptop with an RTX GPU runs the Qwen2.5 7B Instruct LLM, using Ollama as the runtime.

The AI generates structured JSON responses in the format:

    {
      "person_id": "<string>",
      "text": "<string>",
      "robot_code": {
        "pose": "<enum>",
        "expression": "<enum>",
        "gesture": "<enum>"
      }
    }
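As a rough illustration of how a reply in this format could be requested from Ollama, the sketch below builds a request for Ollama's local `/api/chat` endpoint with JSON-constrained output. The model tag, the system prompt, and the way the person ID is embedded in the user message are assumptions made for the example, not details of the actual project.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint
MODEL_TAG = "qwen2.5:7b-instruct"  # assumed tag; use whatever `ollama list` reports

# Hypothetical system prompt; the project's real prompt is not shown.
SYSTEM_PROMPT = (
    "Reply only with a JSON object containing person_id, text, and "
    "robot_code (pose, expression, gesture)."
)


def build_chat_request(person_id: str, user_text: str) -> dict:
    """Build the request body for Ollama's /api/chat endpoint."""
    return {
        "model": MODEL_TAG,
        "format": "json",  # ask Ollama to constrain the output to valid JSON
        "stream": False,   # one complete response instead of streamed chunks
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            # Hypothetical convention for passing the detected person ID.
            {"role": "user", "content": f"[person_id={person_id}] {user_text}"},
        ],
    }


def ask_llm(person_id: str, user_text: str, timeout_s: float = 30.0) -> dict:
    """Send the request to a running Ollama server and parse the JSON reply."""
    body = json.dumps(build_chat_request(person_id, user_text)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout_s) as response:
        reply = json.load(response)
    # With stream=False the assistant text is in reply["message"]["content"].
    return json.loads(reply["message"]["content"])
```

Calling `ask_llm("alice", "Hello!")` requires a running Ollama server with the model pulled. The model's output should still be validated before use, since JSON mode guarantees valid JSON but not the expected keys.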

A portion of this JSON output is transmitted to a Raspberry Pi 4 B running ROS 2, which handles communication and high-level robot control.
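A minimal sketch of this step, assuming the forwarded portion is the `robot_code` object (the text does not say which portion is sent). The allowed enum values below are placeholders, since the format above shows only `<enum>`, and the function name is hypothetical.

```python
import json

REQUIRED_ROBOT_KEYS = ("pose", "expression", "gesture")

# Hypothetical enum values: the source shows only "<enum>" placeholders.
ALLOWED = {
    "pose": {"neutral", "lean_forward"},
    "expression": {"neutral", "smile"},
    "gesture": {"none", "wave"},
}


def extract_robot_code(raw_reply: str) -> dict:
    """Validate an LLM reply and return only the robot-facing fields.

    Raises ValueError if the reply is not valid JSON or does not match
    the expected structure.
    """
    data = json.loads(raw_reply)  # JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    for key in ("person_id", "text"):
        if not isinstance(data.get(key), str):
            raise ValueError(f"missing or non-string field: {key}")
    robot_code = data.get("robot_code")
    if not isinstance(robot_code, dict):
        raise ValueError("missing robot_code object")
    for key in REQUIRED_ROBOT_KEYS:
        value = robot_code.get(key)
        if not isinstance(value, str) or value not in ALLOWED[key]:
            raise ValueError(f"invalid value for robot_code.{key}: {value!r}")
    # Drop any extra keys so only known fields reach the robot.
    return {key: robot_code[key] for key in REQUIRED_ROBOT_KEYS}
```

Rejecting malformed replies on the laptop side keeps unexpected values from reaching the Raspberry Pi and the microcontrollers behind it.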

The Raspberry Pi then sends motion and behavior commands to an STM32F767ZI, responsible for:

controlling the servo motors,

communicating with additional STM32 microcontrollers,

implementing safety mechanisms (watchdog, motion limits, and failsafe logic).
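The safety mechanisms listed above can be modeled roughly as follows. This is a logic sketch in Python for readability, not the STM32 firmware (which would normally be written in C). All names, limits, and the timeout are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ServoLimits:
    min_deg: float
    max_deg: float
    max_step_deg: float  # largest allowed change per control tick


def limit_command(current_deg: float, target_deg: float, limits: ServoLimits) -> float:
    """Motion limits: clamp the target to the allowed range, then rate-limit the step."""
    target = min(max(target_deg, limits.min_deg), limits.max_deg)
    step = target - current_deg
    step = min(max(step, -limits.max_step_deg), limits.max_step_deg)
    return current_deg + step


class CommandWatchdog:
    """Expires when no command has been received within timeout_s."""

    def __init__(self, timeout_s: float) -> None:
        self.timeout_s = timeout_s
        self._last_feed_s: Optional[float] = None

    def feed(self, now_s: float) -> None:
        self._last_feed_s = now_s

    def expired(self, now_s: float) -> bool:
        if self._last_feed_s is None:
            return True  # nothing received yet: treat as unsafe
        return now_s - self._last_feed_s > self.timeout_s


def next_setpoint(current_deg: float, target_deg: float, safe_deg: float,
                  limits: ServoLimits, watchdog: CommandWatchdog, now_s: float) -> float:
    """Failsafe: if commands stop arriving, move toward a safe angle instead."""
    goal = safe_deg if watchdog.expired(now_s) else target_deg
    return limit_command(current_deg, goal, limits)
```

In this model the failsafe reuses the same limiter, so even the return to the safe angle respects the range and step limits.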

Vision and Identification Pipeline

The RTX laptop also hosts an OAK camera, which is used for:

Face detection with 3D coordinates (X, Y, Z)

Person identification (ID recognition)

The detected person ID is sent to the LLM for contextual interaction.

The 3D coordinates are transmitted directly to the Raspberry Pi for robotic orientation and tracking.
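One way the Raspberry Pi could turn a face position into orientation targets is to convert the coordinates into pan and tilt angles. The sketch below assumes a camera frame with X to the right, Y up, and Z forward, all in millimetres, and a camera mounted at the head's rotation center. These conventions and the function name are assumptions and should be checked against the actual setup.

```python
import math


def pan_tilt_from_xyz(x_mm: float, y_mm: float, z_mm: float) -> tuple[float, float]:
    """Return (pan_deg, tilt_deg) that point the camera axis at the target.

    Assumed frame: X right, Y up, Z forward (depth), units in millimetres.
    """
    if z_mm <= 0:
        raise ValueError("target must be in front of the camera (z > 0)")
    # Pan: rotation about the vertical axis, positive toward +X.
    pan_deg = math.degrees(math.atan2(x_mm, z_mm))
    # Tilt: elevation above the horizontal plane, positive toward +Y.
    tilt_deg = math.degrees(math.atan2(y_mm, math.hypot(x_mm, z_mm)))
    return pan_deg, tilt_deg
```

For example, a face at one metre depth and one metre to the right gives a pan of about 45 degrees and a tilt of 0. If the camera is offset from the head joints, a fixed transform would need to be applied first.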

Current Implementation Status

At this stage:

The Qwen2.5 7B LLM runs successfully and responds without noticeable delay.

The OAK camera system detects faces, extracts XYZ coordinates, identifies individuals, and updates its internal database.